The Memory That Mining Forgot

Why the most repeated claim about Bitcoin's proof-of-work — that it is "memoryless" — is, at the protocol level, false

There is a particular species of intellectual error that recurs across disciplines with the reliability of a metronome. It consists in observing a true property of a component, then attributing that property to the whole system in which the component is embedded — without pausing to ask whether the composition preserves the property. Physicists do not make this mistake with thermodynamics. Engineers do not make it with load-bearing structures. Economists, for reasons that would reward a sociological study of their own, sometimes do.

The claim under examination is this: proof-of-work mining is memoryless. It is a claim made in some of the most influential economic treatments of Bitcoin’s security. It appears in respected venues. It has been repeated by serious scholars. It forms, in at least one prominent case, the basis of a theoretical contrast between proof-of-work and proof-of-stake consensus. And, at the level at which it is typically asserted — the level of the protocol and the economic environment — it is demonstrably false.

One might be forgiven for thinking that a claim repeated across a decade of blockchain economics had been formally verified at some point. One would be wrong. The claim was inherited, not proved. It was passed from author to author like an heirloom whose provenance no one thought to check — until, at last, someone did. What follows is the substance of that correction.

What Memorylessness Actually Is

Precision matters here, because the entire argument turns on a definition. The memoryless property is one of the most precisely characterised objects in probability theory. A non-negative random variable X is memoryless if and only if the probability that it exceeds t + s, given that it has already exceeded s, equals the probability that it exceeds t — for all non-negative t and s. Among continuous distributions, only the exponential satisfies this condition. Among discrete distributions, only the geometric does so. This is a theorem, not a stylistic choice.
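Written out in symbols, the definition and the one-line check that the exponential satisfies it look as follows. The rate symbol λ is notation introduced here for illustration; it does not appear in the original argument.

```latex
\[
\Pr(X > t + s \mid X > s) \;=\; \Pr(X > t) \qquad \text{for all } s, t \ge 0 .
\]
For $X \sim \mathrm{Exp}(\lambda)$, with $\Pr(X > t) = e^{-\lambda t}$:
\[
\Pr(X > t + s \mid X > s)
  \;=\; \frac{e^{-\lambda (t + s)}}{e^{-\lambda s}}
  \;=\; e^{-\lambda t}
  \;=\; \Pr(X > t).
\]
```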

The property has an intuitive meaning that everyone who has waited for a bus understands: if the waiting time is memoryless, then having already waited ten minutes tells you nothing about how much longer you will wait. The process has no memory of how long it has been running. Every moment is, probabilistically, a fresh start.

Now, the hash-trial process in Bitcoin mining, in which each trial is a single evaluation of the SHA-256 function against a fixed target, is indeed memoryless. Each trial is independent and identically distributed under the random-oracle idealisation. Having failed a million times tells you nothing about the million-and-first. The waiting time to the first success is geometric (in discrete trials) or exponential (in the continuous-time approximation), and these are, by theorem, the unique discrete and continuous distributions that satisfy the memoryless property.
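The point about the million-and-first trial can be checked directly from the geometric survival function. The sketch below is illustrative only: the per-trial success probability `p` is a made-up number, and the function names are hypothetical.

```python
def geometric_survival(p: float, n: int) -> float:
    """P(N > n): probability that the first n independent trials all fail."""
    return (1.0 - p) ** n


def conditional_survival(p: float, s: int, t: int) -> float:
    """P(N > s + t | N > s) for a geometric waiting time."""
    return geometric_survival(p, s + t) / geometric_survival(p, s)


p = 1e-6  # hypothetical per-trial success probability, for illustration only
already_failed = 1_000_000
further_trials = 500_000

# Chance of surviving 500,000 more failures, from a fresh start and after a million failures.
fresh = geometric_survival(p, further_trials)
after_failures = conditional_survival(p, already_failed, further_trials)
print(fresh, after_failures)  # the two agree up to floating-point rounding
```

The million prior failures cancel out of the ratio, which is exactly what the memoryless property asserts at this level.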

This is true, well-known, and not in dispute.

What is in dispute is the inference that follows. The hash trial is a component of the Nakamoto protocol. The Nakamoto protocol is a system that composes the hash trial with feedback mechanisms, maturity rules, time-varying payoffs, and chain-selection logic. The question is whether memorylessness — which is a property of the component — survives this composition. It does not.

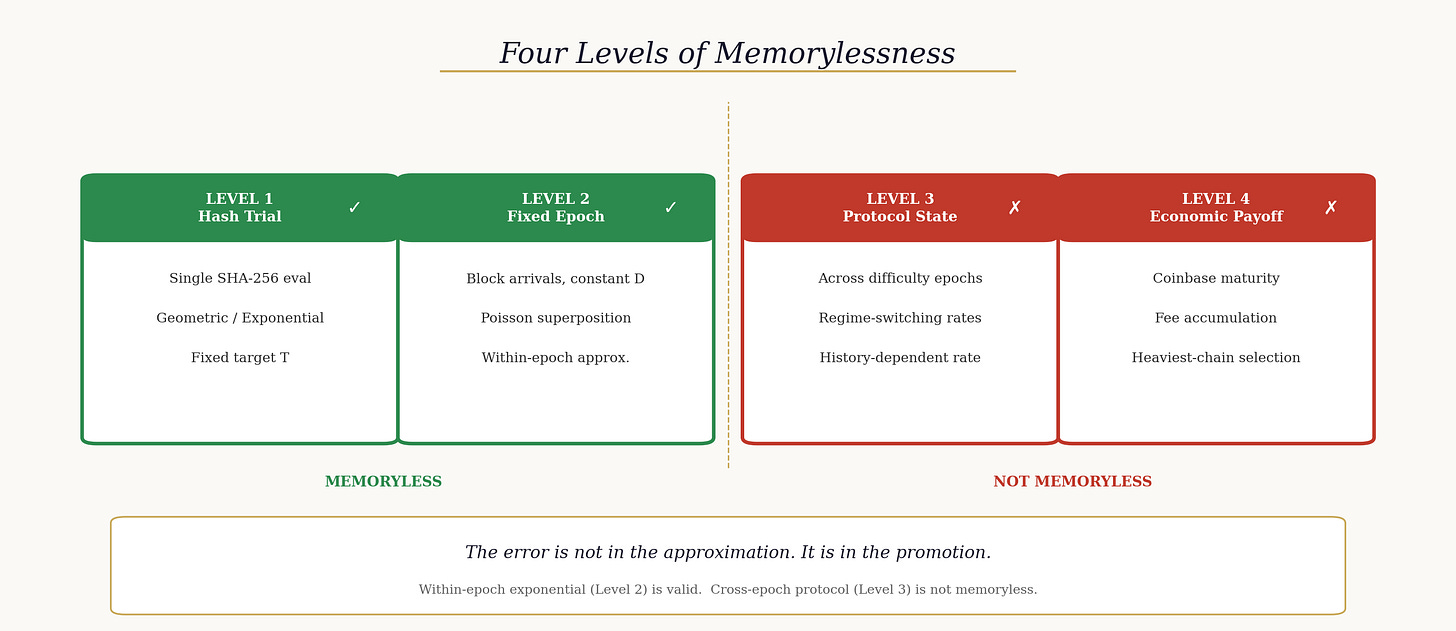

Four Levels, Two Verdicts

The source of the confusion is a failure to distinguish the object to which memorylessness is being attributed. The phrase “mining is memoryless” is used without consistently specifying what “mining” refers to. There are four levels at which the claim might be made, and the taxonomy is worth internalising because it converts a vague slogan into a precise set of propositions — some true, some false.

Level 1 is the hash trial itself: the number of SHA-256 evaluations until the first valid hash, given a fixed target. This is memoryless by the characterisation theorem. No argument there.

Level 2 is the block-arrival process under fixed difficulty: the inter-block arrival times during a single difficulty epoch, in which the target and hence the per-trial success probability are held constant. Under the standard Poisson approximation, which also treats the network hash rate as constant over the epoch, inter-block times are exponential. The process is memoryless by construction, because the parameters are held fixed by assumption. No argument there either.

Level 3 is the protocol-state process across epochs: the stochastic process that maps calendar time to the full protocol state, including difficulty, block height, chain structure, and mempool contents. This process spans multiple difficulty epochs and incorporates the retargeting mechanism.

Level 4 is the realised economic payoff process: the stochastic process that maps calendar time to the miner’s actual economic outcomes — whether discovered blocks remain canonical, whether coinbase rewards have matured, and the value of fees collected.

The verdict: Levels 1 and 2 are memoryless. Levels 3 and 4 are not.

Using Level 2 as a local approximation within a fixed-difficulty epoch is defensible modelling practice. The error arises when this local property is elevated — without qualification — into a characterisation of the Nakamoto protocol as an economic and probabilistic system. It is the difference between saying “the tiles are flat” and “the dome is flat.” The tiles may well be flat. The dome, manifestly, curves.

The Decisive Mechanism: Difficulty Adjustment

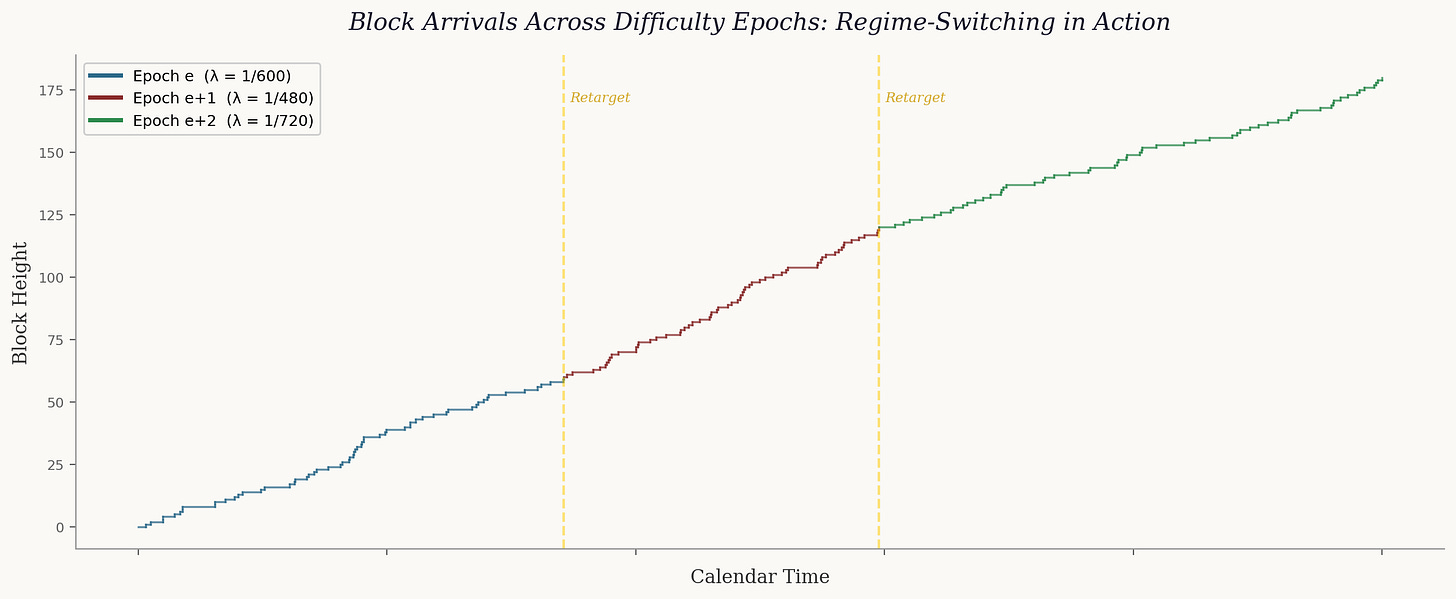

The core of the matter turns on a single mechanism: the difficulty adjustment. Every 2,016 blocks — roughly every two weeks — the Bitcoin protocol updates its difficulty parameter as a deterministic function of the realised duration of the preceding epoch. If blocks arrived too quickly, difficulty increases. If too slowly, it decreases. The formula is public and mechanical: the new difficulty equals the old difficulty multiplied by the ratio of the target epoch duration to the actual epoch duration, subject to a clamping constraint.
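The retarget rule described above can be sketched in a few lines. This is a simplified illustration, not the reference implementation: Bitcoin's consensus code works on the integer target rather than a floating-point difficulty, and the function name `retarget` is hypothetical. The two-week target timespan and the factor-of-four clamp are the values Bitcoin uses.

```python
TARGET_TIMESPAN = 14 * 24 * 60 * 60  # two weeks in seconds (2,016 blocks at ten minutes each)
MAX_ADJUSTMENT = 4  # clamp: difficulty changes by at most a factor of 4 per retarget


def retarget(old_difficulty: float, actual_timespan: float) -> float:
    """Return the next epoch's difficulty given the realised duration of the last one."""
    # Clamp the measured duration so a single retarget moves difficulty by at most 4x.
    clamped = min(max(actual_timespan, TARGET_TIMESPAN / MAX_ADJUSTMENT),
                  TARGET_TIMESPAN * MAX_ADJUSTMENT)
    return old_difficulty * TARGET_TIMESPAN / clamped


print(retarget(100.0, 7 * 24 * 60 * 60))   # epoch took one week: difficulty doubles
print(retarget(100.0, 28 * 24 * 60 * 60))  # epoch took four weeks: difficulty halves
print(retarget(100.0, 24 * 60 * 60))       # epoch took one day: increase is clamped at 4x
```

The only input besides the old difficulty is the realised epoch duration, which is the sense in which next epoch's parameters are a function of history.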

This means that the arrival rate of blocks in epoch e+1 depends on the block-arrival times that were realised during epoch e. The rate parameter is not fixed. It is a function of history. And that is precisely what memorylessness forbids.

Consider a waiting time that spans an epoch boundary, for example an individual miner's wait for its own next block. You are waiting for a block. The current epoch ends. The difficulty adjusts. The rate at which blocks will now arrive is different from the rate at which they were arriving a moment ago, and the new rate was determined by the block-arrival history of the epoch that just concluded. The conditional probability of waiting another t seconds, given that you have already waited s seconds, now depends on what happened during those s seconds. The memoryless property is violated.

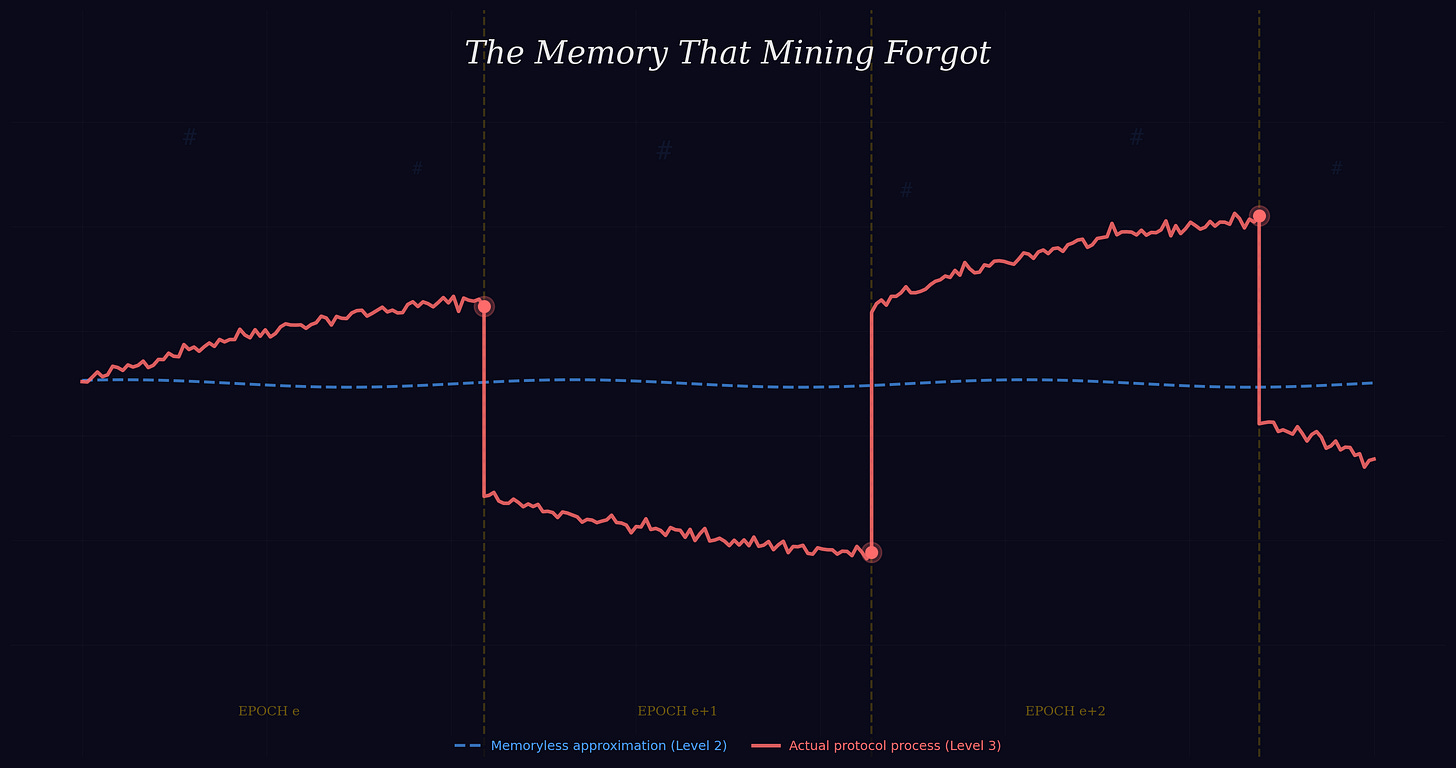

The structure is a piecewise-exponential process whose rate changes at epoch boundaries. Within each epoch, the process is approximately exponential. At the boundary, the rate parameter is updated as a deterministic function of the realised epoch duration; because that duration is itself random, the new rate is a random variable fixed by history. This composite object is a regime-switching process. A waiting time governed by it is not, in general, exponentially distributed, and so it is not memoryless. This is not a conjecture; it is a consequence of the mathematical characterisation of the exponential as the unique continuous memoryless distribution.
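A small numerical check makes the failure of the memoryless identity visible. The sketch below is a deliberately stylised two-regime waiting time, with made-up rates and a fixed boundary; in the protocol the post-boundary rate is itself determined by the preceding epoch, which adds to the history dependence rather than removing it. All names and numbers are hypothetical.

```python
import math


def survival(x: float, lam1: float, lam2: float, boundary: float) -> float:
    """P(T > x) when the hazard rate is lam1 before `boundary` and lam2 after it."""
    before = min(x, boundary)
    after = max(x - boundary, 0.0)
    return math.exp(-lam1 * before - lam2 * after)


def conditional_survival(s: float, t: float, lam1: float, lam2: float, boundary: float) -> float:
    """P(T > s + t | T > s) for the same two-regime waiting time."""
    return survival(s + t, lam1, lam2, boundary) / survival(s, lam1, lam2, boundary)


# Hypothetical numbers: one success per 600 s before the boundary, one per 750 s after,
# with the boundary falling 300 s into the wait.
lam1, lam2, boundary = 1 / 600, 1 / 750, 300.0

fresh = survival(600.0, lam1, lam2, boundary)                            # P(T > 600)
conditioned = conditional_survival(300.0, 600.0, lam1, lam2, boundary)   # P(T > 900 | T > 300)
print(fresh, conditioned)  # the two differ, so the memoryless identity fails
```

Here the first value equals exp(-0.9) and the second exp(-0.8): having already waited 300 seconds changes the distribution of the remaining wait.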

Put the point concretely. Suppose epoch e happened to produce blocks faster than the ten-minute target — hash rate surged, perhaps, from new mining hardware coming online. The protocol responds by increasing difficulty for epoch e+1, which lowers the per-trial success probability, which reduces the arrival rate of blocks. The rate at which blocks arrive tomorrow is a function of how fast they arrived yesterday. The past is inscribed into the future, mechanically and unavoidably. A process whose future rate depends on its own realised history is, by definition, not memoryless. This is not a philosophical subtlety; it is a mathematical consequence of how the protocol was built.

Nor is the violation merely formal. The difficulty adjustment operates approximately every two weeks. Any economic analysis of sufficient duration — any analysis of prolonged attacks, long-run mining investment, or security under changing network conditions — will span at least one retarget boundary, and therefore operate in a regime where the memoryless approximation fails at the protocol level. To treat a fortnightly feedback mechanism as negligible in an economic model is to declare the medium run irrelevant by assumption. That is a bold claim. It deserves to be stated explicitly, not smuggled in under the label of “memorylessness.”

Three Reinforcing Mechanisms

Difficulty adjustment alone is sufficient to establish the result. But the Nakamoto protocol contains three further mechanisms that independently make the miner’s realised economic environment history-dependent. These concern distinct economic objects: the prize, the payoff realisation, and the finality of the discovered block.

Coinbase maturity. The coinbase transaction in a block at height h cannot be spent until the chain extends to height h + 100. A miner who discovers a block does not receive an immediate and unconditional reward. The reward is conditional on the block surviving one hundred confirmations without being reorganised out of the canonical chain. The probability that the reward is ultimately realised depends on the confirmation depth accumulated so far and on the fork state at the time of assessment. A block with fifty confirmations and no competing fork is, by any reasonable model, far more likely to pay out than a block with five confirmations and a competing fork of depth three. The maturity process is therefore history-dependent: the conditional probability of survival over the next interval depends on the realised history of chain extension. The process has memory. It was designed to have memory. The protocol’s architects were not confused on this point; only its subsequent economic theorists were.

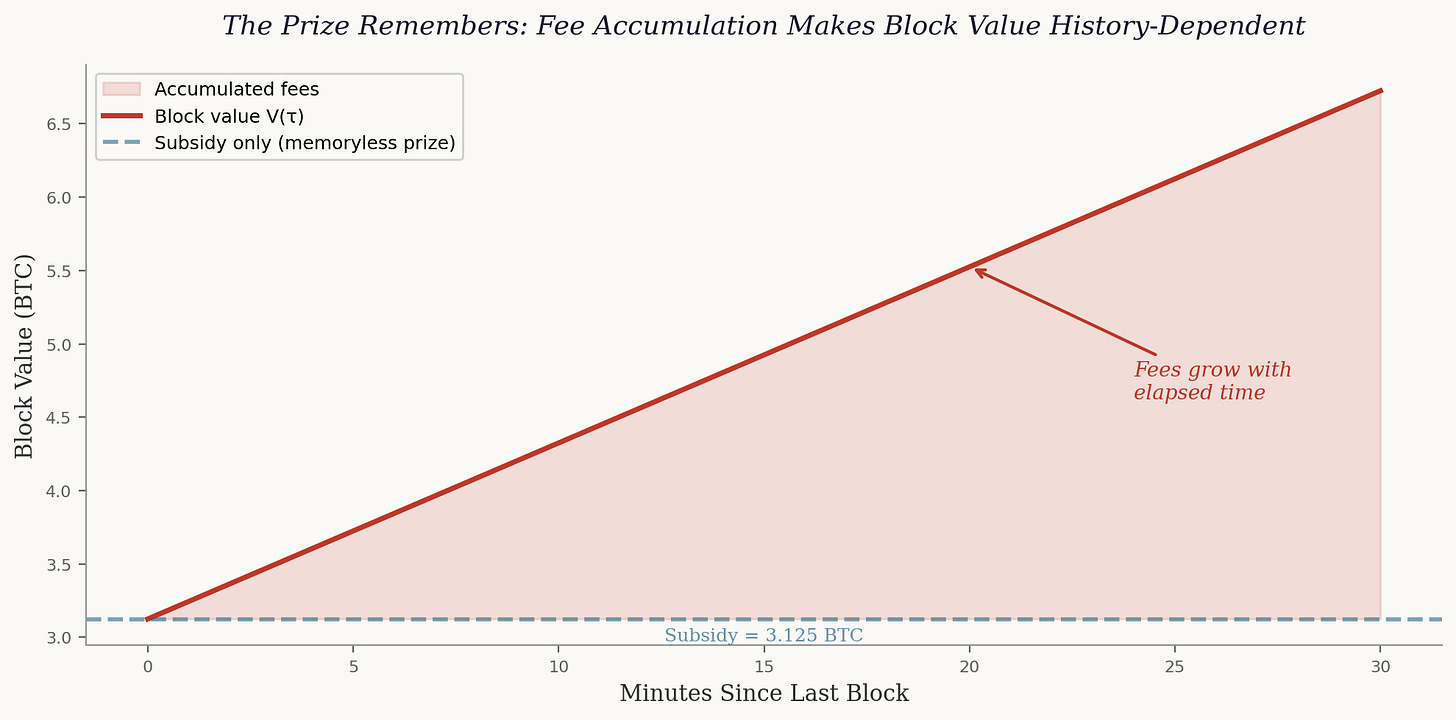

Fee accumulation. The value of the next block is not constant. It is the subsidy plus the fees that have accumulated in the mempool since the last block was found. If no block has been discovered for twenty minutes rather than ten, the prize is larger, by roughly the fees that arrived during those additional ten minutes (up to what a single block has room to include). The prize conditional on no block having been found by time s exceeds the prize at the same residual time from a fresh start. Even if the arrival rate were constant (which, as we have seen, it is not across epochs), the value of what is being competed for is not. The economic environment grows richer the longer the current inter-block interval lasts. A lottery that changes its jackpot based on how long it has been running is not, in any economically meaningful sense, memoryless.
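One stylised way to write the fee argument down is given below. It assumes fees flow into the mempool at a constant rate φ, ignores block-capacity limits, and takes the residual waiting time T to be exponential with rate λ. All three are simplifying assumptions introduced here for illustration, as are the symbols R (the subsidy), φ and λ.

```latex
\[
V(s) \;=\; R + \varphi\, s
\qquad \text{(prize available } s \text{ seconds after the last block)}
\]
\[
\mathbb{E}\bigl[V(T) \mid T > s\bigr]
  \;=\; R + \varphi\Bigl(s + \tfrac{1}{\lambda}\Bigr)
  \;>\; R + \tfrac{\varphi}{\lambda}
  \;=\; \mathbb{E}\bigl[V(T)\bigr]
  \qquad \text{for } s > 0 .
\]
```

Even with a memoryless arrival time, the expected prize conditional on having already waited s seconds is larger by φs, so the payoff process carries the elapsed time with it.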

Heaviest-chain selection. Whether a block is canonical, that is, whether it counts, depends on all blocks discovered after it. A block that was part of the heaviest chain at time t may not be part of the heaviest chain at time t + 1, if a competing fork overtakes it in accumulated work. The probability of reorganisation after k confirmations is a decreasing function of k, which is itself a random variable determined by the block arrivals since the block was discovered. The canonical-status process depends on realised history. This is not an exotic observation; it is the foundation of the entire confirmation-counting security model that every user of Bitcoin relies upon. When someone waits for six confirmations before treating a payment as final, they are relying on the fact that the protocol’s finality process has memory: deeper burial means greater security. The very practice of counting confirmations is an acknowledgement that the system remembers.
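The dependence on confirmation depth can be illustrated with the gambler's-ruin formula used in the Bitcoin whitepaper: an attacker holding a share q of the hash power, starting z blocks behind, ever catches up with probability (q/p)^z when q < p, where p = 1 - q. The sketch below covers only this ingredient; the whitepaper's full calculation also accounts for attacker progress made while the confirmations accumulate. The function name and the 10% attacker share are illustrative assumptions.

```python
def catch_up_probability(q: float, z: int) -> float:
    """Probability that an attacker with hash-power share q ever catches up from z blocks behind."""
    p = 1.0 - q  # honest share of hash power
    if q >= p:
        return 1.0  # a majority attacker catches up with certainty
    return (q / p) ** z


# Hypothetical attacker with 10% of the hash power, at several confirmation depths.
for z in (1, 3, 6):
    print(z, catch_up_probability(0.10, z))
```

The probability shrinks geometrically in z, which is the quantitative content of the claim that deeper burial means greater security: the risk attached to a block depends on how many blocks have arrived since.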

Each of these three mechanisms is independently sufficient to make the realised economic payoff process non-memoryless. Together with the difficulty-adjustment result, they establish that the Nakamoto protocol fails the memoryless property at every level above the fixed-difficulty block-arrival process, that is, at Levels 3 and 4.

The Error and Its Consequences

The local approximation is, within its proper scope, perfectly sound. The Poisson model of block arrivals within a fixed-difficulty epoch is a good model for the purpose for which it was designed. To the extent that an analysis is understood as applying within a fixed-difficulty regime, it is using Level-2 memorylessness, which holds by construction. That is not the object of criticism. Not every use of Poisson-style mining language is a mistake.

The error arises in the unqualified generalisation. When a prominent economic treatment of Bitcoin’s security draws an explicit contrast between proof-of-work (characterised as memoryless) and proof-of-stake (characterised as not memoryless), the relevant object is not the hash trial. It is the protocol-level economic environment. And at that level, the characterisation is incorrect. The difficulty adjustment creates a feedback loop from past block production to future success probabilities that introduces path dependence at the protocol level. This is different in mechanism from proof-of-stake lock-up, but it is not the absence of history dependence that the formulation implies.

This matters because theoretical conclusions depend on the properties attributed to the system. If you assume the economic environment is memoryless when it is not, you may derive results that hold only under an idealisation that the actual protocol does not satisfy. A constant-difficulty environment is a modelling choice, and as a modelling choice it may be defensible for certain purposes. But it should be stated as a modelling choice — not treated as a neutral description of the protocol’s probabilistic character.

The distinction is between a simplifying assumption and a claimed property. One is honest; the other is, at best, careless. And in economic theory, carelessness about assumptions has a tendency to metastasise into carelessness about conclusions. The history of economics is littered with models whose assumptions were acknowledged to be “simplifying” by their authors and then treated as “approximately true” by their successors. The memoryless characterisation of proof-of-work is following the same trajectory. It began as a tractable modelling choice. It is ending as an unexamined article of faith. The correction aims to arrest that progression before it reaches its terminal stage — which, in intellectual history, is the stage at which everyone knows the claim is false but no one can be bothered to stop citing it.

What the Protocol Actually Is

If the Nakamoto protocol is not a memoryless process, then what is it? The answer, once stated, is not difficult to internalise: it is a piecewise-exponential process with endogenous regime switching.

Within each difficulty epoch, inter-block times are approximately exponential. At epoch boundaries, the rate parameter changes as a deterministic function of the epoch’s block-arrival history. Overlaid on this is a payoff process that makes block value time-dependent, a maturity rule that conditions payoff realisation on future chain evolution, and a chain-selection rule that makes block validity a function of all subsequent mining activity.
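The first two sentences of this characterisation, exponential arrivals within an epoch and a history-driven rate change at each boundary, can be sketched as a short simulation. It is a toy model under stated assumptions: hash rate is constant, units are stylised so that the block arrival rate is `hash_rate / difficulty`, the epoch duration is the sum of 2,016 inter-block times (timestamp details are ignored), and fees, maturity and forks are left out. The function name and scenario numbers are hypothetical.

```python
import random

BLOCKS_PER_EPOCH = 2016
TARGET_SPACING = 600.0  # seconds per block
TARGET_TIMESPAN = BLOCKS_PER_EPOCH * TARGET_SPACING


def simulate_epochs(hash_rate: float, difficulty: float, epochs: int, seed: int = 0):
    """Return a list of (difficulty, realised_duration_seconds), one entry per epoch."""
    rng = random.Random(seed)
    history = []
    for _ in range(epochs):
        rate = hash_rate / difficulty  # blocks per second, fixed within the epoch
        duration = sum(rng.expovariate(rate) for _ in range(BLOCKS_PER_EPOCH))
        history.append((difficulty, duration))
        # Regime switch: the next rate is set by the duration just realised (4x clamp).
        clamped = min(max(duration, TARGET_TIMESPAN / 4), TARGET_TIMESPAN * 4)
        difficulty = difficulty * TARGET_TIMESPAN / clamped
    return history


# Hypothetical scenario: hash rate 25% above what the starting difficulty assumes.
for d, dur in simulate_epochs(hash_rate=1.25 / 600.0, difficulty=1.0, epochs=4):
    print(f"difficulty={d:.3f}  epoch_duration_days={dur / 86400:.2f}")
```

In this scenario the first epoch finishes early, difficulty rises in response, and later epochs settle near the two-week target. Each epoch's rate is read off the previous epoch's realised duration, which is the endogenous regime switching described above.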

This is not a complicated characterisation. It is, in fact, a rather natural one — once you stop trying to force the protocol into a single distributional mould. The protocol was designed by an engineer, not by a probability theorist seeking distributional elegance, and it has the pragmatic layering that good engineering tends to produce. The difficulty adjustment is a feedback controller. The coinbase maturity rule is a vesting schedule. The fee accumulation is a time-varying prize. The heaviest-chain rule is a retrospective validation mechanism. Each of these is a standard construction in its respective domain. Together, they produce a process that is far richer than any single exponential distribution can accommodate.

The methodological consequence is straightforward: economic models of proof-of-work mining must specify the level at which memorylessness is being invoked. If the model uses exponential arrivals within a single epoch as a tractable approximation — say so. If the analysis spans multiple epochs, or concerns payoff realisation, or evaluates reorganisation risk — do not assume memorylessness at the protocol level, because it does not hold there.

The Broader Lesson

There is a pattern in the economic analysis of engineered systems that deserves more attention than it receives. A technically precise property of a low-level component is observed, named, and then — through repetition and imprecise citation — promoted to the status of a system-level characterisation. The promotion is never formally justified. It simply happens, through the accumulated weight of one treatment citing another, each treating the claim as slightly more established than the source warranted.

The memoryless characterisation of proof-of-work mining is an instance of this pattern. The hash trial is memoryless. This is a theorem. “Mining is memoryless” is a slogan derived from that theorem. And “the Nakamoto protocol is memoryless” is a false claim derived from that slogan. The inferential chain is: theorem, then slogan, then error. Each step is understandable. The final destination is wrong.

This should not occasion despair. It should occasion precision. The within-epoch exponential approximation is a useful tool. Use it where it applies. Acknowledge its limits where it does not. Do not confuse a local approximation for a global characterisation, and do not build theoretical edifices on properties that the system does not possess.

In matters of formal modelling, the level of abstraction — not the elegance of the algebra — is the vital thing. A beautifully derived result from a false premise is still a false conclusion, no matter how pleasing the derivation. The algebra may sparkle. The premise must hold.

The broader implication for blockchain economics is that the systems under analysis are engineered artefacts with specific, documented mechanisms. They are not stylised abstractions waiting to be interpreted. The difficulty adjustment is written in code. The coinbase maturity rule is enforced by every validating node. The fee accumulation is a function of mempool dynamics that varies in real time. These are not optional features of a theoretical model. They are constitutive elements of the system being modelled. To ignore them is not to simplify; it is to describe a different system entirely.

And when the premise is that the Nakamoto protocol is memoryless — the premise does not hold. The protocol remembers. It was designed to.
