Why Transaction Throughput Determines How Long Bitcoin’s Security Model Holds

The mathematics of mining memorylessness has an expiry date — and it depends on how many transactions you process.

Most people who follow Bitcoin have heard that mining is “memoryless.” The idea is simple: every hash computation is an independent lottery ticket. It does not matter how many hashes you have already tried; the probability of the next hash being valid is exactly the same as the first. This is why mining is modelled as a Poisson process, why block arrivals are treated as exponentially distributed, and why the entire economic security analysis of proof-of-work rests on the mathematics of memoryless stochastic processes. It is, however, only an approximation, and a limited one.

This assumption underwrites a very large body of academic work. Eric Budish’s 2025 paper in the Quarterly Journal of Economics — the most influential economic treatment of Bitcoin’s security — explicitly states that “Nakamoto trust is memoryless.” The selfish-mining literature, the double-spending probability calculations, the queueing models for transaction confirmation times, the Markov decision process formulations for optimal attack strategies — all of them depend, directly or indirectly, on the block-arrival process being Poisson and the individual hash trial being geometrically distributed.

But here is the problem: the hash trial is only approximately memoryless. And the approximation has an expiry date.

The Finite Urn

In 2024, José Parra-Moyano, Gregor Reich, and Karl Schmedders published a paper in Computational Economics that identified something the literature had overlooked. The SHA-256 hash function has a finite domain. A miner trying to find a valid block can vary the 4-byte nonce and the 8-byte coinbase extra-nonce, giving 2^96 (approximately 79 billion billion billion) possible inputs per block template. That number is enormous. But it is not infinite.

Mining is therefore not sampling with replacement (where the probability stays constant forever). It is sampling without replacement (where every failed attempt eliminates one possibility from the remaining pool). The correct distribution is the negative hypergeometric, not the negative binomial that the literature assumes. The difference is negligible when the number of draws is tiny relative to the urn size. But as hash rates grow under Moore’s Law — doubling roughly every 18 months — the fraction of the urn explored in a single block’s mining period grows too.

The standard rule of thumb in statistics, following Devore and Berk (2012), is that the without-replacement distribution can be approximated by the with-replacement distribution as long as the sample size does not exceed 5% of the population. Once you are drawing more than 5% of the balls from the urn, the approximation starts to break down in ways that matter.
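The difference between the two sampling schemes can be illustrated with a toy urn. The sketch below is not taken from the paper: the urn size, the single valid input, and the function names are assumptions chosen for illustration. It compares the chance that the next draw succeeds, given a number of failures so far, with and without replacement.

```python
from fractions import Fraction

def hazard_with_replacement(n_total, n_valid, failures):
    """Chance the next draw succeeds when failed inputs go back in the urn."""
    # `failures` is ignored on purpose: this is the memoryless case.
    return Fraction(n_valid, n_total)

def hazard_without_replacement(n_total, n_valid, failures):
    """Chance the next draw succeeds when each failed input is discarded."""
    return Fraction(n_valid, n_total - failures)

N, K = 1000, 1  # toy urn (assumption): 1,000 inputs, one of them valid
for pct in (1, 5, 50):
    k = N * pct // 100  # failed draws so far
    ratio = hazard_without_replacement(N, K, k) / hazard_with_replacement(N, K, k)
    print(f"{pct}% of urn drawn: success chance is {float(ratio):.3f}x the memoryless value")
```

In this toy setting the ratio is N / (N − k), so it stays close to 1 while the sample is a small share of the urn and grows as the urn empties.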

At the current global hash rate of approximately 750 exahashes per second, the network performs about 4.5 × 10^23 hash computations during the 10 minutes it takes to mine a block. That is 0.0006% of the 2^96 restricted domain. Negligible. The Poisson approximation is excellent today.

But project forward at that doubling rate. By 2040, the hash rate would reach approximately 768,000 EH/s. The network would explore about 0.6% of the domain per block: still small, but entering the range where the departure from the Poisson model becomes detectable. By 2050, at approximately 78 million EH/s, the network would explore 59% of the restricted domain in a single block. More than half of all possible input combinations would be tried. At that point the memoryless property, the mathematical foundation of every security model in the literature, fails for individual hash trials.
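The projection can be reproduced with a few lines of arithmetic. This is a sketch under stated assumptions: the base year of 2025 is inferred from the article's "current" figures rather than given explicitly, and the constant and function names are illustrative.

```python
DOMAIN = 2 ** 96          # 32-bit nonce + 64-bit extra-nonce per template
BLOCK_SECONDS = 600       # target block interval
BASE_YEAR = 2025          # assumption: the year of the "current" hash rate
BASE_HASHRATE = 750e18    # 750 EH/s, as quoted in the text
DOUBLING_YEARS = 1.5      # doubling every 18 months

def hashrate(year):
    """Projected network hash rate in hashes per second."""
    return BASE_HASHRATE * 2 ** ((year - BASE_YEAR) / DOUBLING_YEARS)

def fraction_explored(year):
    """Share of one template's 2^96 domain hashed during one block interval."""
    return hashrate(year) * BLOCK_SECONDS / DOMAIN

for year in (2025, 2040, 2050):
    print(year, f"{hashrate(year) / 1e18:,.0f} EH/s", f"{fraction_explored(year):.4%}")
```

Changing `DOUBLING_YEARS` shows how sensitive the dates are to the growth assumption; a slower doubling period pushes every figure later.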

This is not a theoretical curiosity. It means that, without high transaction throughput, the security calculations that underpin every economic analysis of proof-of-work have a shelf life.

The Template Refresh Effect

But the story does not end there. And this is where transaction throughput changes everything.

A block template is defined by its previous-block hash, its set of transactions, and its coinbase output address. The restricted domain of 2^96 applies to one specific template. When any component of the template changes (crucially, when a new transaction is added to the candidate block), the Merkle root changes, which changes the block header, which means the miner is now hashing in a completely different region of the hash function’s input space. A new Merkle root is functionally equivalent to opening a fresh urn of 2^96 balls.
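To see why one extra transaction changes the header, here is a minimal sketch of a Bitcoin-style Merkle root built with double SHA-256. The transaction ids are hypothetical toy values (hashes of short strings), and the byte-order conventions of the real serialization are ignored.

```python
import hashlib

def sha256d(data: bytes) -> bytes:
    """Double SHA-256, the hash used for Bitcoin's Merkle tree."""
    return hashlib.sha256(hashlib.sha256(data).digest()).digest()

def merkle_root(txids):
    """Pair up hashes level by level, duplicating the last hash when a
    level has an odd count, until a single root remains.
    Assumes `txids` is non-empty."""
    level = list(txids)
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        level = [sha256d(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]

# Toy transaction ids (hypothetical payloads, not real transactions).
txids = [sha256d(f"tx-{i}".encode()) for i in range(4)]
before = merkle_root(txids)
after = merkle_root(txids + [sha256d(b"tx-new")])
print("root changed:", before != after)
```

Because the root is one of the header fields being hashed, a different root means every nonce and extra-nonce value maps to a different hash input than before.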

Every new transaction arriving in the mempool creates at least one new potential block template. If a protocol processes T transactions per second, then during the 600-second block-mining interval, miners have access to at least T × 600 distinct templates, each with its own fresh 2^96 domain. The effective domain per block is:

Effective domain = (Templates per block) × 2^96 = (T × 600) × 2^96

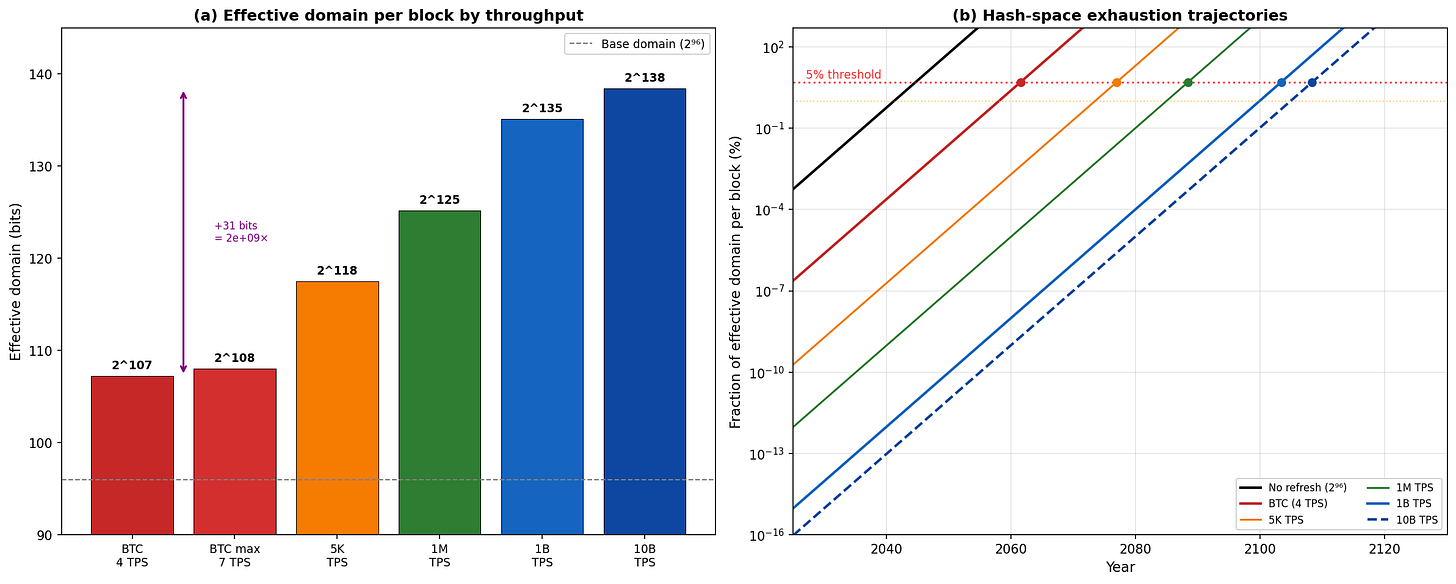

At 4 TPS: 2,400 templates → 2^107

At 1 billion TPS: 600 billion templates → 2^135

At 10 billion TPS: 6 trillion templates → 2^138
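The three figures above follow directly from the effective-domain formula. A short sketch of the calculation, with illustrative names, expresses the result in bits:

```python
import math

BLOCK_SECONDS = 600   # target block interval
BASE_BITS = 96        # nonce + extra-nonce bits per template

def effective_domain_bits(tps):
    """log2 of (templates per block) x 2^96, with one template per transaction."""
    templates = tps * BLOCK_SECONDS
    return BASE_BITS + math.log2(templates)

for tps in (4, 1e9, 1e10):
    print(f"{tps:,.0f} TPS -> about 2^{effective_domain_bits(tps):.1f}")
```

The one-template-per-transaction count is the lower bound used in the text; any other source of template changes would only add to it.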

This is where protocol design enters the mathematics. The throughput of a proof-of-work protocol is not a market outcome. It is a design parameter, determined by the protocol’s block-size policy and the processing capacity of the node software. The choice of throughput capacity directly determines how large the effective hash domain is, and therefore how long the Poisson approximation remains valid.

Figure 1

Panel (a): Effective domain in bits for different throughput levels. BTC at 4 TPS has an effective domain of 2^107, only 11 bits more than the raw 2^96. A system at 10 billion TPS reaches 2^138, roughly 2.5 billion times larger than BTC’s (the ratio of 10 billion to 4). Panel (b): Hash-space exhaustion trajectories over time. Each throughput scenario crosses the 5% Devore–Berk threshold (red horizontal line) at a different date. Higher throughput pushes the crossing decades into the future.

The Numbers

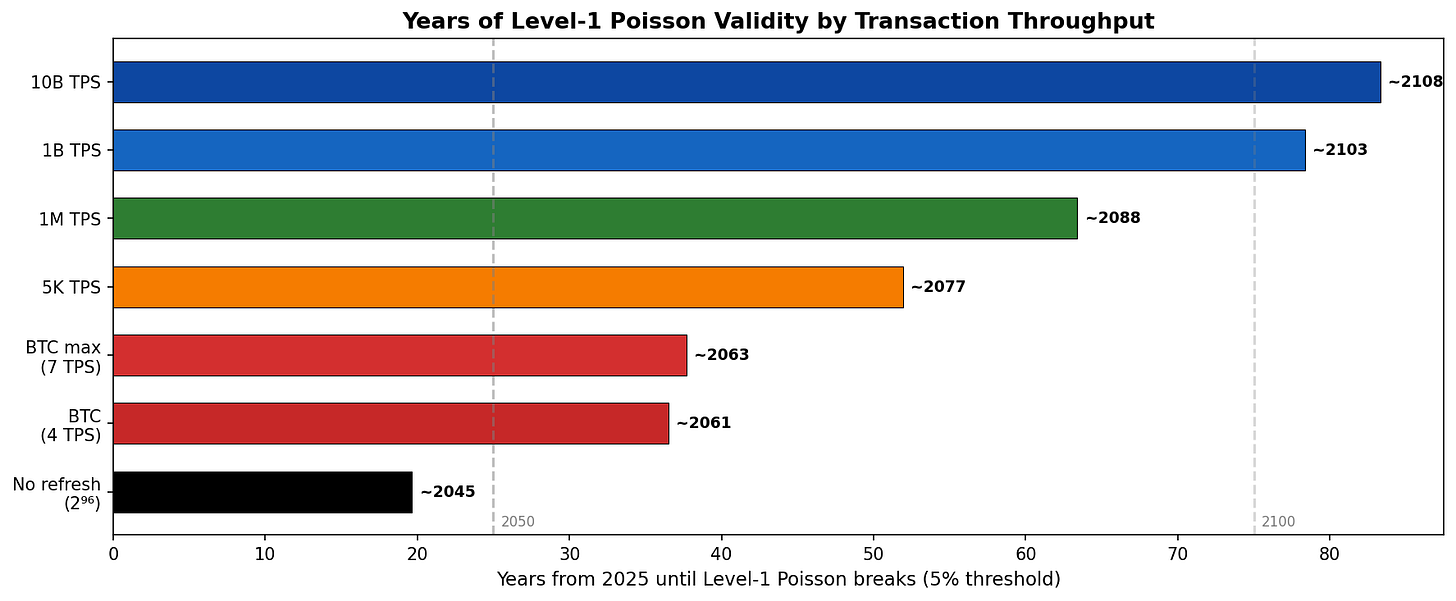

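| System | TPS | Effective Domain | Poisson Breaks | Margin vs BTC |
|---|---|---|---|---|
| No refresh | n/a | 2^96 | ~2045 | −16 years |
| BTC (observed) | 4 | 2^107 | ~2061 | baseline |
| 5,000 TPS | 5,000 | 2^118 | ~2077 | +15 years |
| 1 million TPS | 10^6 | 2^125 | ~2088 | +27 years |
| 1 billion TPS | 10^9 | 2^135 | ~2103 | +42 years |
| 10 billion TPS | 10^10 | 2^138 | ~2108 | +47 years |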

Every tenfold increase in throughput adds approximately 3.3 bits to the effective domain. Going from BTC’s 4 TPS to 10 billion TPS adds 31 bits — extending the Poisson validity by 47 years.
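The breakdown dates can be approximated by solving for the year in which hashes per block reach 5% of the effective domain. The sketch below assumes a 2025 base year at 750 EH/s and a fixed 18-month doubling period; the base year is an inference from the article's figures, and the function name is illustrative.

```python
import math

BASE_YEAR = 2025          # assumption: year of the 750 EH/s figure
BASE_HASHRATE = 750e18    # hashes per second
DOUBLING_YEARS = 1.5      # doubling every 18 months
BLOCK_SECONDS = 600       # target block interval
THRESHOLD = 0.05          # Devore-Berk 5% rule of thumb

def breakdown_year(tps=None):
    """Year when hashes per block reach 5% of the effective domain.
    tps=None models the no-refresh case (a single template per block)."""
    templates = 1 if tps is None else tps * BLOCK_SECONDS
    needed = THRESHOLD * templates * 2 ** 96      # hashes per block at the threshold
    today = BASE_HASHRATE * BLOCK_SECONDS         # hashes per block in the base year
    return BASE_YEAR + DOUBLING_YEARS * math.log2(needed / today)

for label, tps in [("no refresh", None), ("4 TPS", 4), ("1 billion TPS", 1e9)]:
    print(f"{label}: {breakdown_year(tps):.1f}")
```

Under these assumptions each extra bit of effective domain buys one doubling period, which is where the roughly 1.5 years per bit in the paragraph above comes from.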

At 1 billion transactions per second, a miner receives approximately 600 billion new transactions per block. Every microsecond, roughly 1,000 new transactions arrive, each enabling a new Merkle root and hence a new template. The template changes continuously; the miner never remains on a single template long enough to explore any measurable fraction of its 2^96 restricted domain. At 10 billion TPS, the template refresh rate reaches roughly 10,000 new transactions per microsecond. The urn is replaced faster than any miner can meaningfully sample from it.

Even at 2050 hash rates, the fraction of the effective domain explored per block at 1 billion TPS is 9.8 × 10^−13. Vanishingly small. The Poisson approximation holds comfortably for over a century.

These throughput levels are not hypothetical. Sustained throughput exceeding one million transactions per second has been demonstrated on adversarial proof-of-work networks using UTXO-based architectures, and architectural modelling supports throughput in the billion-TPS range. Demand at these scales arises from machine-to-machine payments, Internet-of-Things micropayments, supply-chain settlement, and high-frequency data integrity applications — use cases where the volume of individual transactions is large even if the value per transaction is small.

The BTC Problem

Bitcoin’s current throughput constraint (approximately 4 transactions per second, imposed by the 1 MB block-size limit) gives it only 2,400 new templates per block. That extends the effective domain from 2^96 to 2^107. Eleven extra bits. A modest improvement.

This is not merely a mathematical inconvenience. It creates a compounding economic problem.

Figure 2

The critical-dates chart shows how many years of Poisson validity each throughput level provides. BTC’s bar ends at ~2061. A billion-TPS system extends past 2100. The gap is 42–47 years of additional validity for the security model that underpins economic analysis of proof-of-work.

The security budget. As Bitcoin’s block subsidy halves every four years (from 3.125 BTC in 2024 toward 0.05 BTC by 2048), transaction fees must increasingly fund the security budget. Budish’s equilibrium constraint requires that the per-block payment to miners is large relative to the value of attacking the system. Under a throughput-constrained protocol, total fee revenue per block is capped: it equals (transactions per block) × (average fee). If the number of transactions is fixed at roughly 4,000 per block, sustaining the security budget requires increasing the average fee. High per-transaction fees risk pricing out users. If users leave, hashrate falls. If hashrate falls, the approach to the Poisson breakdown accelerates.
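The subsidy decline quoted above can be checked with a simplified schedule. This sketch approximates halvings by calendar year; in the protocol itself a halving occurs every 210,000 blocks, so real dates drift slightly. The function name and defaults are illustrative.

```python
def block_subsidy(year, base_year=2024, base_subsidy=3.125, halving_years=4):
    """Approximate block subsidy in BTC, halving every four calendar years."""
    halvings = max(0, (year - base_year) // halving_years)
    return base_subsidy / 2 ** halvings

for year in (2024, 2028, 2036, 2048):
    print(year, block_subsidy(year))
```

Six halvings after 2024 the subsidy is 3.125 / 64, just under 0.05 BTC, which matches the 2048 figure in the text.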

The feedback loop. Under a high-throughput protocol, the same security budget can be funded through volume: millions or billions of low-fee transactions producing the same aggregate fee revenue without per-transaction cost pressure. A billion transactions at $0.0001 each produces $100,000 per block — comparable to current block rewards — without pricing out any user. The fee-accumulation mechanism that makes the economic environment non-memoryless (block value grows with elapsed time as fees accumulate) operates in both cases, but the sustainability of the required fee level differs fundamentally.
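The volume argument is simple division, sketched here with the article's own illustrative numbers: a $100,000 per-block target, roughly 4,000 transactions per block in the constrained case, and one billion in the high-volume case. The function name is hypothetical.

```python
def average_fee_needed(target_usd_per_block, tx_per_block):
    """Average fee per transaction required to reach a per-block fee target."""
    return target_usd_per_block / tx_per_block

TARGET = 100_000  # USD per block, the article's illustrative figure

print("constrained:", average_fee_needed(TARGET, 4_000))          # about 4,000 tx per block
print("high volume:", average_fee_needed(TARGET, 1_000_000_000))  # one billion tx per block
```

The same target that requires a fee of tens of dollars per transaction at a few thousand transactions needs only a hundredth of a cent at a billion.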

A throughput-constrained protocol faces three reinforcing pressures simultaneously:

Limited template refresh → the Poisson approximation breaks sooner (effective domain 2^107 vs 2^135+)

Limited fee volume → security-budget pressure as subsidies decline

High per-transaction fees → user-exodus risk → hashrate decline → faster approach to the Poisson threshold

Each channel reinforces the others. A high-throughput system breaks the loop entirely.

What This Means for the Economics of Proof-of-Work

Budish’s central economic insight — that permissionless proof-of-work trust requires ongoing flow payments large relative to the value of attack — is correct. That result does not depend on the Poisson assumption. It follows from the permissionless, anonymous, free-entry structure of the protocol. His one-shot Nash equilibrium argument, which establishes that the block reward must exceed the value of attack scaled by the attack duration, uses no Poisson machinery at all. That is the core of his paper, and it stands.

What does depend on the Poisson assumption is the specific machinery Budish uses to compute attack durations, attack costs, and the zero-net-cost benchmark. His closed-form formulas treat the attack race as a homogeneous birth-death process — honest blocks arrive at one rate, attacker blocks at another, and the expected duration is a first-passage time from a simple random walk. This is valid if and only if the Poisson approximation holds. His Theorem 2 — that the net cost of a majority attack is zero under idealised conditions — is derived within this framework.

The difficulty adjustment mechanism already breaks the Poisson assumption at the protocol level. Every two weeks, Bitcoin updates its difficulty parameter as a deterministic function of past block times. This means the block-arrival rate in the next epoch depends on the history of block arrivals in the current epoch — a direct violation of the memoryless property. Fee accumulation does the same at the payoff level: block value grows with elapsed time as transactions queue in the mempool. Coinbase maturity makes reward realisation depend on whether the block survives 100 confirmations. Heaviest-chain selection makes block validity depend on all subsequent mining. These channels are active now, regardless of throughput.
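The history dependence of the difficulty rule can be made concrete with a simplified retarget function. This is a sketch, not the reference implementation: it works on a floating-point difficulty rather than the compact target encoding, and it ignores details such as the exact block timestamps used to measure the timespan.

```python
RETARGET_BLOCKS = 2016                                # blocks per difficulty epoch
TARGET_SPACING = 600                                  # seconds per block
TARGET_TIMESPAN = RETARGET_BLOCKS * TARGET_SPACING    # 1,209,600 s, i.e. two weeks

def next_difficulty(current_difficulty, actual_timespan_seconds):
    """Scale difficulty by how fast the last epoch was mined, with the
    measured timespan clamped to a factor of four in either direction."""
    clamped = min(max(actual_timespan_seconds, TARGET_TIMESPAN // 4),
                  TARGET_TIMESPAN * 4)
    return current_difficulty * TARGET_TIMESPAN / clamped

# An epoch mined in one week instead of two doubles the difficulty.
print(next_difficulty(100.0, TARGET_TIMESPAN // 2))
```

The output depends only on past block times, which is exactly the dependence on history that a memoryless arrival process rules out.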

The finding reported here adds a further dimension: the horizon over which the Poisson approximation holds at the hash-trial level — the most fundamental level, below even the within-epoch block arrivals — is not exogenous. It is a function of the protocol’s transaction throughput. A protocol designer choosing throughput capacity is implicitly choosing the validity horizon of the security model. Budish’s framework treats the stochastic foundation as given. This analysis shows it is a design variable.

The Long View

Satoshi Nakamoto stated that Bitcoin was designed never to hit a scale ceiling. The protocol’s original specification imposed no fixed block-size limit. The mathematics of memorylessness provides an independent reason why throughput matters: it is not merely a question of how many payments the system can handle. It determines how long the mathematical foundations of the system’s security model remain valid.

A throughput-constrained protocol at 4 TPS reaches the Poisson breakdown for individual blocks by approximately 2061. A protocol operating at 1 billion TPS extends that date to 2103. At 10 billion TPS, the effective hash domain is so large that the Poisson approximation holds comfortably past 2108.

The difference is 47 years. That is not a rounding error. It is the difference between a security model that expires within the operational lifetime of infrastructure being built today, and one that remains mathematically sound for the rest of the century.

Consider what 2061 means in practical terms. It is 36 years from now. Mining facilities built today with 20-year site leases will still be operating. Institutional investors evaluating proof-of-work as an asset class over a multi-decade horizon need to know whether the mathematical model underpinning the security analysis remains valid over that horizon. If the answer is “the model breaks within the facility’s operational lifetime under a constrained-throughput protocol, but holds for the entire century under a high-throughput protocol,” that is a material input to the investment decision.

Miners commit capital measured in billions of dollars, with hardware lifetimes of 2–4 years and power-purchase agreements spanning 3–5 years. They operate in a protocol environment whose difficulty adjusts every two weeks and whose subsidy halves every four years. The stochastic foundations on which their investment decisions rest should not have an expiry date that arrives before the infrastructure pays for itself. Whether those foundations hold depends on a protocol design choice: how many transactions per second the system is built to process.

None of this is speculation. The hash domain is finite — that is a mathematical fact about SHA-256. Moore’s Law may slow, but the trajectory is monotonically upward. The halving schedule is hard-coded into the protocol. The fee-accumulation mechanism is a consequence of mempool dynamics. The difficulty adjustment is specified in the protocol’s source code. Every component of this analysis follows from the protocol’s own specification combined with the mathematical properties of the hash function it uses.

The mathematics is clear. The design choice is the protocol’s.

Trying to learn here.

Can the miners on the low TPS network not just get a new template in other ways?

eg:

- change tx ordering

- switching coinbase

- add dummy txs

Or would that be too expensive of a change to their setup?